TL;DR – Common topics that come up when partners, specifically MSSPs, are testing Microsoft Sentinel features to evaluate its SIEM and SOAR capabilities. Part 3

This post is part of a series that covers various topics, starting from the very basics, so that partners who are familiar with other SIEMs but not yet familiar with Azure can get all the information they need to be successful.

- Part 1 – MSSP Architecture Goal, Tenants and Subscriptions required for the POC, Required Permissions, and Azure Lighthouse.

- Part 2 – B2B or GDAP, CSPs, Where is the data?, Migrations, Which connectors?

- Part 3 – Agents and Forwarders, Sample Data, Storage Options, Repositories, Workspace Manager, Cross-workspace, and Other Resources. (this post)

Agents and Forwarders

This topic always comes up during POCs. Most partners are familiar with the Log Analytics agent, also known as the OMS or MMA agent. However, the Log Analytics agent will be retired in August 2024. The new Azure Monitor Agent (AMA) is a consolidation of agents: it will replace the Log Analytics agent, as well as the Telegraf agent and the Diagnostics extension. Best of all, AMA supports Data Collection Rules (DCRs), which support filtering data at ingestion time, not just for agents, but also for other types of ingested data.

The Azure Monitor Agent (AMA) and Data Collection Rules (DCRs) also allow you to send different types of data to different Log Analytics workspaces, so if you have performance-related data that is not useful for security purposes, you don’t need to send it to the workspace that is associated with Sentinel. You can also use XPath queries in a DCR to filter out the Windows events you don’t need.
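To make the XPath filtering idea more concrete, here is a minimal sketch that builds the body of a DCR collecting only selected Security event IDs. The function name, the data source and destination names, and the default location are my own placeholders, and the layout reflects my understanding of the DCR schema, so please verify it against the current documentation before deploying anything.

```python
import json


def build_windows_event_dcr(workspace_resource_id, event_ids, location="eastus"):
    """Return a DCR body that collects only the given Security event IDs.

    All names below (data source name, destination name, default location)
    are illustrative placeholders, not values from a real deployment.
    """
    if not event_ids:
        raise ValueError("at least one event ID is required")
    # Build an XPath filter such as:
    #   Security!*[System[(EventID=4624 or EventID=4625)]]
    id_filter = " or ".join(f"EventID={int(event_id)}" for event_id in event_ids)
    xpath = f"Security!*[System[({id_filter})]]"
    return {
        "location": location,
        "properties": {
            "dataSources": {
                "windowsEventLogs": [
                    {
                        "name": "filteredSecurityEvents",
                        "streams": ["Microsoft-SecurityEvent"],
                        "xPathQueries": [xpath],
                    }
                ]
            },
            "destinations": {
                "logAnalytics": [
                    {
                        "name": "sentinelWorkspace",
                        "workspaceResourceId": workspace_resource_id,
                    }
                ]
            },
            # Route the filtered stream to the Sentinel workspace only.
            "dataFlows": [
                {
                    "streams": ["Microsoft-SecurityEvent"],
                    "destinations": ["sentinelWorkspace"],
                }
            ],
        },
    }


def dcr_as_json(workspace_resource_id, event_ids):
    """Serialize the DCR body so it can be pasted into a template or API call."""
    return json.dumps(build_windows_event_dcr(workspace_resource_id, event_ids), indent=2)
```

A second DCR with a different destination could carry the performance data to a non-Sentinel workspace, which is the split described above.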

Partners have various options to install the AMA, including VM extensions, Azure Policy, or the Windows installer. My preferred option is using Azure Policy via Defender for Cloud (MDC). This works for any server on any cloud or on-prem. From the Settings & monitoring menu for the specific subscription, you can configure Defender for Servers to deploy the AMA and select the workspace that will be used, which in the case of a Sentinel POC should be the Sentinel workspace. I prefer this option because any new servers I add will automatically get the agent.

And with agent questions come forwarder questions during the POC. Yes, partners can configure a forwarder for the CEF via AMA connector, and you can forward syslog data to the Sentinel workspace using the AMA. There is also a Content hub solution that includes an AMA migration tracker workbook.
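When testing a forwarder, it can help to send it a hand-crafted CEF message. The sketch below formats a CEF line and sends it over UDP syslog. The function names, the sample field values, and the default port and priority are assumptions for illustration. Real devices typically add a timestamp and hostname, so treat this as a connectivity test only.

```python
import socket


def _escape_header(value):
    # In CEF header fields, backslashes and pipes must be escaped.
    return str(value).replace("\\", "\\\\").replace("|", "\\|")


def _escape_extension(value):
    # In CEF extension values, backslashes, equals signs and newlines are escaped.
    return str(value).replace("\\", "\\\\").replace("=", "\\=").replace("\n", "\\n")


def format_cef(vendor, product, version, signature_id, name, severity, extensions=None):
    """Build a CEF:0 message from header fields and an optional extension dict."""
    header = "|".join(
        _escape_header(field)
        for field in (vendor, product, version, signature_id, name, severity)
    )
    extension = " ".join(
        f"{key}={_escape_extension(value)}" for key, value in (extensions or {}).items()
    )
    return f"CEF:0|{header}|{extension}"


def send_syslog_udp(message, host, port=514, priority=14):
    """Send one message to a syslog listener over UDP.

    priority=14 means facility 'user' (1) * 8 + severity 'info' (6).
    Only a bare <PRI> prefix is added here; this is a simplification.
    """
    payload = f"<{priority}>{message}".encode("utf-8")
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))


# Example usage (the forwarder address is hypothetical):
#   event = format_cef("Contoso", "Firewall", "1.0", "100", "Test event", 5,
#                      {"src": "10.0.0.1", "dst": "10.0.0.2"})
#   send_syslog_udp(event, "10.1.2.3")
```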

Finally, if you are migrating from the Log Analytics agent to the AMA, there is also a script that removes the previous agent on all Azure VMs.

Sample Data

Another common question during POCs is how to generate sample data. There are various ways partners can generate sample data on the workspaces acting as customer workspaces during the POC. I list some of the options below:

- Microsoft Sentinel Training Lab – This is a solution available within Sentinel’s Content hub that is usually a great way to learn Sentinel, because it goes over the core features within Sentinel. I am including it in the list because it does include a little bit of sample data. So, if you don’t need much for your POC, then please consider using this one.

- Microsoft Sentinel To-Go! – A community resource that also includes some sample data.

- Ingest Sample CEF data into Azure Sentinel – Microsoft Community Hub – This blog post is older, but the sample events provided are still very relevant. Personally, I prefer this option if I am testing a specific analytic rule because I can feed it the specific data that I need to trigger that rule.

- AttackIQ – This is another one of my preferred ones from our partner AttackIQ. I have this agent installed on my Azure VMs and AWS EC2s, in my case. I especially like this one when I need to generate sufficient incidents to simulate a multistage attack, a Fusion incident.

- There are additional simulation options with other Microsoft Security services. There are likely more than I am listing below, but these are the ones I’ve used:

- Alert validation in Microsoft Defender for Cloud | Microsoft Learn

- Deploy Microsoft Defender for Endpoint on Linux manually | Microsoft Learn (mde_linux_edr_diy.sh). This is useful if you have the Microsoft 365 Defender connector installed.

- Simulating risk detections in Azure AD Identity Protection. This is useful if you have the Microsoft 365 Defender connector installed.

- New ingestion-SampleData-as-a-service solution, for a great Demos and simulation – Microsoft Community Hub. This is useful if you have data elsewhere that you want to ingest to other workspaces.

Storage Options

This topic may not be of utmost importance during a POC, but some partners prefer to test their ability to take advantage of less expensive storage options during POCs, so I am including it here.

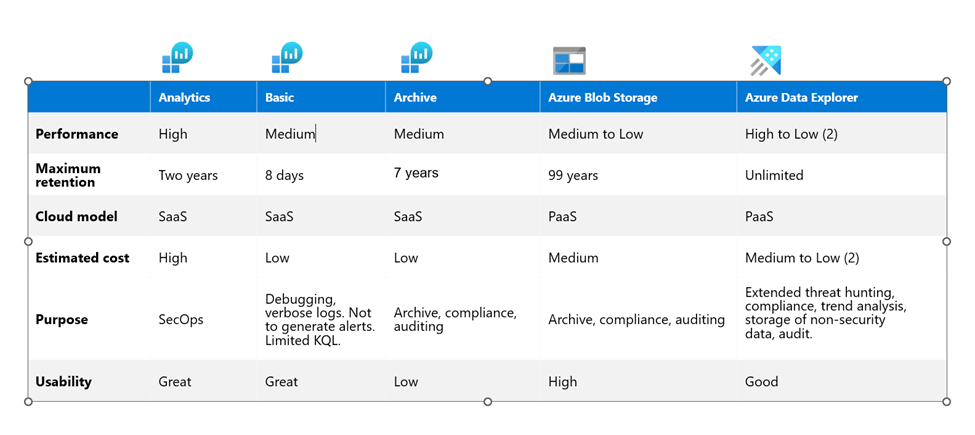

The default storage option for Sentinel ingestion is Analytics tables. The retention of these tables can be adjusted for all tables within the workspace, or individually per table. Partners can also choose a different table plan, called Basic, which has significantly lower costs. Basic tables have an interactive retention of 8 days and come with some restrictions: they cannot trigger alerts, and they support only a limited set of KQL operators. These tables are meant for noisy logs, such as firewall logs or flow logs, that are normally used for debugging or troubleshooting purposes. Partners can also configure a total retention period for both Analytics and Basic tables, which moves the data to Archive once the interactive retention period is over. The maximum total retention is 7 years. Partners can also use the Search feature to query and rehydrate data from Archive as needed.
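As an illustration of how these settings fit together, here is a small sketch that prepares the URL and body for a table update through the Azure management API. It only builds the request and does not send it. The function name, the API version, and the exact day count used for the 7-year limit are my assumptions, so check them against the Tables API reference.

```python
API_VERSION = "2022-10-01"  # assumption: confirm the current Tables API version
MAX_TOTAL_RETENTION_DAYS = 2556  # assumption: roughly the 7-year maximum


def build_table_update(subscription_id, resource_group, workspace, table,
                       plan="Analytics", interactive_days=None, total_days=None):
    """Return (url, body) for updating a table's plan and retention."""
    if plan not in ("Analytics", "Basic"):
        raise ValueError("plan must be 'Analytics' or 'Basic'")
    if plan == "Basic" and interactive_days is not None:
        # Basic tables have a fixed interactive retention, so it is not sent.
        raise ValueError("interactive retention cannot be set on Basic tables")
    if total_days is not None:
        if total_days > MAX_TOTAL_RETENTION_DAYS:
            raise ValueError("total retention exceeds the 7-year maximum")
        if interactive_days is not None and total_days < interactive_days:
            raise ValueError("total retention must cover the interactive period")

    properties = {"plan": plan}
    if interactive_days is not None:
        properties["retentionInDays"] = interactive_days
    if total_days is not None:
        # Data older than the interactive period is kept in Archive
        # until the total retention period is reached.
        properties["totalRetentionInDays"] = total_days

    url = (
        f"https://management.azure.com/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.OperationalInsights/workspaces/{workspace}"
        f"/tables/{table}?api-version={API_VERSION}"
    )
    return url, {"properties": properties}
```

For example, `build_table_update(sub, rg, ws, "SecurityEvent", interactive_days=90, total_days=730)` describes 90 days of interactive retention followed by Archive up to two years in total.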

But wait, there’s more! All the options I mentioned are available within the Log Analytics workspace, but there are also external storage options, such as Blob Storage and Azure Data Explorer (ADX). Partners can query Blob Storage using the externaldata operator in KQL, which can also be used within an analytic rule. Additionally, ADX and Blob Storage can be combined: partners can create an external table in ADX that points to the whole container.
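To show what an externaldata query looks like, here is a minimal sketch that assembles the KQL text for a file stored in Blob Storage. The function name, column names, and URL are hypothetical. The `h@` prefix marks the URL as an obfuscated string literal, so a SAS token in it is not written to query logs.

```python
def build_externaldata_query(columns, blob_url, data_format="csv", skip_header=True):
    """Build a KQL externaldata query.

    columns is a list of (name, kql_type) pairs, e.g. [("IP", "string")].
    """
    if not columns:
        raise ValueError("at least one column is required")
    schema = ", ".join(f"{name}:{kql_type}" for name, kql_type in columns)
    properties = [f'format="{data_format}"']
    if skip_header:
        # Skip the header row of a delimited file.
        properties.append("ignoreFirstRecord=true")
    return (
        f"externaldata({schema})\n"
        f'[h@"{blob_url}"]\n'
        f"with({', '.join(properties)})"
    )


# Example usage (the storage account, container and file are made up):
#   print(build_externaldata_query(
#       [("IP", "string"), ("Description", "string")],
#       "https://contoso.blob.core.windows.net/watchlists/bad_ips.csv",
#   ))
```

The resulting text can be pasted into the Logs blade or used as part of an analytic rule query, for example by joining it against a table on the IP column.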

Here is a summary of the various storage options currently available:

Repositories

This feature is essential for MSSPs, so it has come up for every POC I’ve worked on. This feature allows partners to push content, such as analytic rules, hunting queries, workbooks, automation rules, playbooks, and parsers to customer workspaces. I covered this feature in detail in a blog post creatively named Sentinel Repositories. I also cover this topic in my Microsoft Sentinel Deep Dive session (1hr:34min mark). There is even a Sample Repository available for testing the Repositories feature. Future and existing automation features may also be combined with Repositories to allow MSSPs to manage the content that is published on their customers’ workspaces.

Update: Workspace Manager

Workspace Manager is a new feature that was recently introduced, which allows MSSPs to configure the MSSP workspace to act as the parent or grandparent of various other workspaces, typically customer workspaces. Workspace Manager still depends on Azure Lighthouse; it does not replace it. To configure workspaces to be managed from a central MSSP workspace, the Azure Lighthouse relationship must already exist. Using Workspace Manager, an MSSP can publish content to the child workspaces. For the list of artifacts currently supported by Workspace Manager, please reference the documentation.

This feature allows MSSPs to group customer workspaces depending on their requirements. For example, healthcare customers may have different analytic rule and workbook requirements than education customers, so MSSPs can group them and then publish different content to each of those workspaces. Workspaces can be part of different groups, so you can publish content from different groups to the same workspace. In my opinion, an ideal MSSP solution would include a combination of both Repositories and Workspace Manager.
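The grouping idea can be pictured with a tiny model. This is not how Workspace Manager is implemented or configured. It is only a sketch, with made-up group, workspace, and content names, of how a workspace that belongs to several groups ends up with the combined content of all of them.

```python
from collections import defaultdict


def content_per_workspace(groups):
    """Map each workspace to the sorted list of content published to it.

    groups maps a group name to a dict with 'workspaces' and 'content' lists.
    """
    published = defaultdict(set)
    for group in groups.values():
        for workspace in group["workspaces"]:
            # A workspace in several groups receives content from each one.
            published[workspace].update(group["content"])
    return {workspace: sorted(items) for workspace, items in published.items()}


# Hypothetical groups for illustration only.
example_groups = {
    "healthcare": {
        "workspaces": ["customer-a", "customer-b"],
        "content": ["HIPAA workbook", "EHR access rule"],
    },
    "all-customers": {
        "workspaces": ["customer-a", "customer-b", "customer-c"],
        "content": ["Baseline sign-in rule"],
    },
}
```

With these groups, `content_per_workspace(example_groups)` gives customer-a and customer-b three items each, while customer-c only receives the baseline rule.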

Cross-workspace

In the previous posts of this series, I covered how customers can delegate access to partners. I also covered the fact that artifacts can exist on both the MSSP workspace as well as the customer’s workspace. This makes it easier to keep some artifacts that are considered intellectual property within the MSSP workspace only. What I didn’t cover yet is how those artifacts can be used to access customers’ data. Here are the options:

- Multiple workspace incident view – This is a view that is available as soon as your customers delegate access to you using Azure Lighthouse or if you have multiple workspaces within your tenant.

- Cross workspace querying – Through the Logs blade you can query multiple workspaces using the workspace() expression and the union operator.

- Cross workspace analytic rules – Partners can create analytic rules that include up to 20 workspaces in the query. Keep in mind most analytic rules will run on the customer’s workspace, but you do have this option for cases where a cross workspace analytic rule is needed.

- Cross workspace workbooks – There are various Content hub solutions that include workbooks that can query data across workspaces, and partners can also create their own. For example, the Incident Overview workbook and the Microsoft Sentinel Cost workbook allow partners to view this data across their customers’ workspaces, as long as they have access.

- Cross workspace hunting – Similar to the querying through the Logs blade, partners can also save those queries to hunt later.
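For the cross workspace querying and analytic rule options above, here is a minimal sketch that assembles a union query over several workspaces using the workspace() expression. The function name and workspace names are hypothetical, and the 20-workspace check simply mirrors the analytic rule limit mentioned in the list.

```python
MAX_RULE_WORKSPACES = 20  # analytic rule limit mentioned in the list above


def build_cross_workspace_query(workspaces, table, where_clause=None,
                                for_analytic_rule=False):
    """Build a KQL union query over the same table in several workspaces."""
    if not workspaces:
        raise ValueError("at least one workspace is required")
    if for_analytic_rule and len(workspaces) > MAX_RULE_WORKSPACES:
        raise ValueError("an analytic rule query can include up to 20 workspaces")
    sources = ", ".join(f'workspace("{name}").{table}' for name in workspaces)
    query = f"union {sources}"
    if where_clause:
        # Apply the filter to the combined result set.
        query += f"\n| where {where_clause}"
    return query


# Example usage (workspace names are made up):
#   print(build_cross_workspace_query(
#       ["customer-a-ws", "customer-b-ws"], "SecurityEvent", "EventID == 4625"))
```

The same text can be saved as a hunting query, which is the last option in the list.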

Other Resources

To close, I want to include a list of links that we put together to help partners that are ramping up with Microsoft Sentinel. You can find that list of links here: https://aka.ms/SentinelLinks. That list is constantly being updated. It includes all sorts of information, including links to the various Ninja trainings, as well as additional links on more advanced subjects, such as UEBA, Fusion, and SOAR automation.

I hope this information is useful to all those MSSPs planning a Sentinel POC. As much as I tried to include everything I could think of, I am certain I forgot something. So, who knows, maybe there will be a Part 4. Until then, happy testing!
