TL;DR – Common topics that come up when partners, specifically MSSPs, are testing Microsoft Sentinel features to evaluate its SIEM and SOAR capabilities. Part 2

This post is part of a series that starts from the very basics, so that partners who are familiar with other SIEMs but not yet familiar with Azure can get all the information they need to be successful.

- Part 1 – MSSP Architecture Goal, Tenants and Subscriptions required for the POC, Required Permissions, and Azure Lighthouse.

- Part 2 – B2B or GDAP, CSPs, Where is the data?, Migrations, Which connectors? (this post)

- Part 3 – Agents and Forwarders, Sample Data, Storage Options, Repositories, Workspace Manager, Cross-workspace, and Other Resources.

B2B or GDAP

In the previous post I focused on accessing resources that exist within a subscription. However, partners can also manage other security services that exist at the tenant level, such as the entire suite of Microsoft 365 Defender services, including Defender for Endpoint, Defender for Cloud Apps (CASB), Defender for Identity, etc. For partners to access tenant-level services, they will need tenant-level access. This becomes more important when testing Sentinel because incidents that involve any of those services include links to Microsoft 365 Defender. For those links to work as expected, the user must have access to those services.

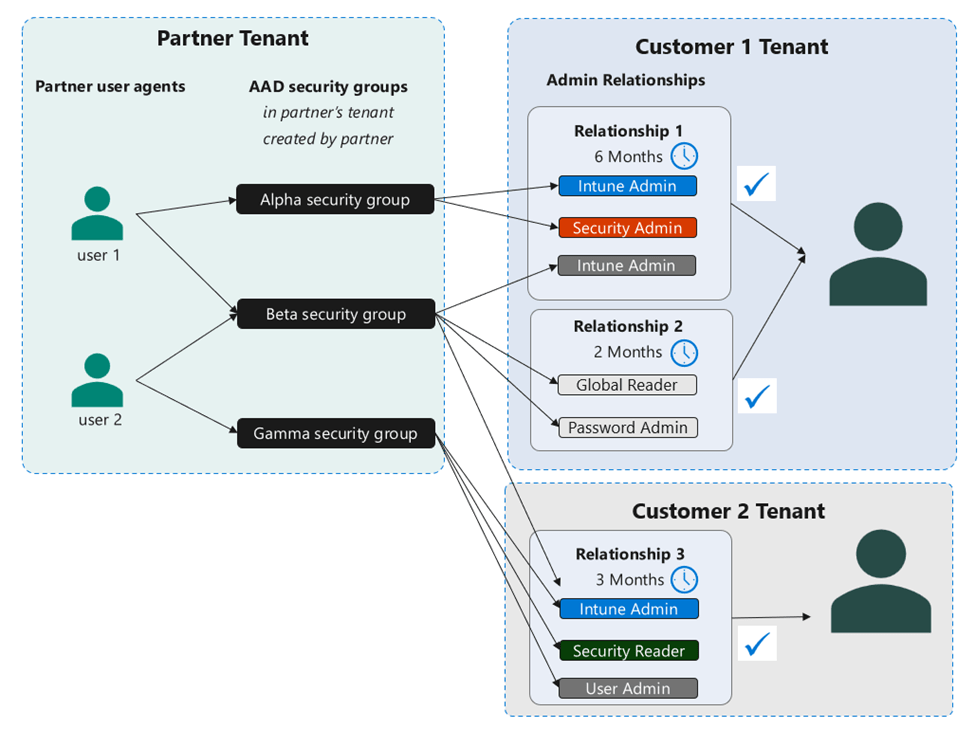

Both GDAP and B2B provide partners with the ability to access tenant-level services for their customers. Most partners are already familiar with B2B, which is available for all tenants to invite guests into their tenant to collaborate. However, some partners have either compliance or cybersecurity insurance requirements that prevent them from having an identity in their customers’ tenants. That’s where GDAP comes in. Granular Delegated Admin Privileges (GDAP) is available exclusively to Cloud Solution Providers (CSPs) and it is configured via Partner Center. GDAP allows partners to access customer resources in a secure manner and it doesn’t require the existence of an identity in the customer’s tenant. The diagram below depicts an example of GDAP delegations.

Partners performing a POC can choose to use either GDAP or B2B to access the tenants acting as customer tenants during the POC. For the purposes of the Sentinel POC, the access and behavior with GDAP and with B2B are equivalent. However, the ultimate goal for a production configuration should be to use GDAP, as well as to implement the documented CSP best practices. If the requirement is to follow the same procedures during the POC that will be followed at go-live, then configuring GDAP is recommended. If the requirement is just to test Sentinel’s features for a period of time, then partners can just use B2B. For additional details, I recently delivered a session to partners on MSSPs & Azure Lighthouse, where I covered a few more details on GDAP, which I also mentioned briefly in my previous blog post MSSPs and Identity: Q&A.

More on CSPs

Partners that are Cloud Solution Providers (CSPs) can create subscriptions for customers. This is a great option to keep in mind because some customers migrating from legacy SIEM solutions may not have a security subscription. This may not be of importance during the POC, but it may be one of those scenarios that can also be tested, depending on the requirements of the POC. That subscription will then be billed through the partner, but it will still be associated with the customer’s tenant.

Where is the data?

As I mentioned previously in my blog posts, the customer’s security subscription, where Sentinel is onboarded, should always be associated with the customer’s tenant. Sentinel artifacts, such as analytic rules, workbooks, automation rules, playbooks, etc., can exist on either the MSSP workspace or the customer workspace. However, customer data should always be ingested into the customer’s workspace. This is very important to keep in mind during the POC, because features such as UEBA, and various connectors, such as Microsoft 365 Defender, will only work with the supported configuration, which depends on data being ingested into the customer’s workspace. There may be other types of data, such as Threat Intelligence (TI) data, that can be ingested into the MSSP tenant.
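To make those placement rules easier to check against a POC plan, here is a minimal sketch in Python. The category names, the `ALLOWED_WORKSPACES` table, and the sample plan are all hypothetical labels I made up for illustration; they simply restate the paragraph above and are not part of any Sentinel API.

```python
# Hypothetical placement rules distilled from the paragraph above:
# customer data belongs in the customer workspace, while TI data and
# Sentinel artifacts may live on either side.
ALLOWED_WORKSPACES = {
    "customer_data": {"customer"},
    "threat_intelligence": {"customer", "mssp"},
    "sentinel_artifact": {"customer", "mssp"},
}


def placement_issues(plan):
    """Return (item, workspace) pairs that break the placement rules."""
    return [
        (item, workspace)
        for item, (kind, workspace) in plan.items()
        if workspace not in ALLOWED_WORKSPACES[kind]
    ]


# Illustrative POC plan: item name -> (category, target workspace).
poc_plan = {
    "SigninLogs": ("customer_data", "customer"),
    "Firewall logs": ("customer_data", "mssp"),
    "TAXII feed": ("threat_intelligence", "mssp"),
    "Shared workbook": ("sentinel_artifact", "mssp"),
}

print(placement_issues(poc_plan))
```

In this made-up plan only the firewall logs are flagged, because they are customer data routed to the MSSP workspace.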

For more details on this topic and many other Sentinel MSSP topics, I highly recommend that all partners read (and re-read) the Microsoft Sentinel Technical Playbook for MSSPs.

Migrations

Most MSSPs performing a POC are currently using a legacy SIEM that they need to migrate from. Luckily, we have great migration documentation available. Microsoft Sentinel can even run side-by-side with legacy SIEM solutions, as described in the documentation.

For POCs specifically, a common concern is how to convert all the existing rules to Sentinel KQL. I always tell partners to focus on the data sources first, because many of the connectors are available as Content hub solutions. That means that not only is the connector available, but it also comes with other artifacts, such as analytic rules, workbooks, playbooks, etc. There are now over 250 solutions in the marketplace. It’s a matter of figuring out which data sources need to be covered during the POC and then, as the migration documentation states, mapping the rules within the legacy SIEM to the rules provided within the solution.
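The mapping exercise can be sketched as a simple comparison. This is only an illustration in Python: the `LegacyRule` type, the rule IDs, and the detection labels are hypothetical, and in practice the coverage would come from reviewing the analytic rules that ship with each solution.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LegacyRule:
    name: str
    data_source: str
    detects: str  # short label for what the rule detects


def find_gaps(legacy_rules, solution_coverage):
    """Split legacy rules into those a solution already covers and the gaps.

    solution_coverage maps a data source to the set of detections that the
    solution for that source already provides as analytic rules.
    """
    covered, gaps = [], []
    for rule in legacy_rules:
        shipped = solution_coverage.get(rule.data_source, set())
        (covered if rule.detects in shipped else gaps).append(rule)
    return covered, gaps


# Made-up inventory of legacy SIEM rules for the POC data sources.
legacy = [
    LegacyRule("FW-001", "firewall", "port_scan"),
    LegacyRule("FW-002", "firewall", "custom_geo_block"),
    LegacyRule("ID-001", "identity", "brute_force"),
]
# Made-up summary of what the matching solutions already detect.
coverage = {
    "firewall": {"port_scan"},
    "identity": {"brute_force", "impossible_travel"},
}

covered, gaps = find_gaps(legacy, coverage)
print("covered:", [r.name for r in covered])
print("gaps:", [r.name for r in gaps])
```

Only the rules that land in `gaps` are candidates for manual conversion to KQL.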

Partners can end up with some gaps, but not all the rules will have to be converted. In fact, there are also repositories, such as the unified Microsoft Sentinel and Microsoft 365 Defender repository, that have many artifacts available as well. And that’s not the only one. There are various other community repositories that have additional resources available. As I tell my partners, don’t reinvent the wheel. There is likely something out there that is either what you need or close enough that you can tweak it to be what you need. There are also tools that offer free Sigma rule translations, such as SOC Prime’s Uncoder.IO. And my guess is Security Copilot will also help with this. I can’t wait to play with it! 🙂

Finally, as partners go through the POC, they sometimes come up with their own solutions. It is useful to know that they can publish them to the marketplace.

Which connectors?

We get the connectors question quite frequently, but especially for POCs. Partners want to know which connectors they should configure and test during a Sentinel POC. The answer is always ‘it depends’ :). It really does depend on the compliance regulations, internal security requirements, and internal priorities that the partner must satisfy.

There are many options to ingest data into Microsoft Sentinel. You can also find a list of connectors in the documentation. Even if you don’t see a connector for your solution, keep in mind that some connectors, such as Syslog and CEF, can support a variety of data sources. We always say that we haven’t met the data source we haven’t been able to ingest yet. It’s always a matter of figuring out the best way to ingest it. A great way to visualize the options is the diagram below.

Finally, there is a Zero Trust solution in Content hub that also has some recommended data connectors, and it sorts them from Foundational, to Basic, to Intermediate, to Advanced. Here are those referenced in the solution:

| Foundational Data Connectors |
| --- |
| Azure Activity |
| Azure Active Directory |
| Office 365 |
| Microsoft Defender for Cloud |
| Network Security Groups |
| Windows Security Event (AMA) |
| DNS |
| Azure Storage Account |
| Common Event Format (CEF) |
| Syslog |
| Amazon Web Services (AWS) |

| Basic Data Connectors |
| --- |
| Microsoft 365 Defender |
| Azure Firewall |
| Windows Firewall |
| Azure WAF |
| Azure Key Vault |

| Intermediate Data Connectors |
| --- |
| Azure Information Protection |
| Dynamics 365 |
| Azure Kubernetes Service (AKS) |
| Qualys Vulnerability Management |

| Advanced Data Connectors |
| --- |
| Azure Active Directory Identity Protection |
| Threat Intelligence TAXII |
| Microsoft Defender Threat Intelligence |
| Microsoft Defender for IoT |
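If it helps with POC planning, the tiers above can be captured in a small lookup. This is just a sketch in Python; the connector names are copied from the lists above, while `ZERO_TRUST_TIERS`, `TIER_ORDER`, and `connectors_up_to` are hypothetical names of my own, not something the Zero Trust solution exposes.

```python
# Connector tiers as listed above, in order from Foundational to Advanced.
ZERO_TRUST_TIERS = {
    "Foundational": [
        "Azure Activity",
        "Azure Active Directory",
        "Office 365",
        "Microsoft Defender for Cloud",
        "Network Security Groups",
        "Windows Security Event (AMA)",
        "DNS",
        "Azure Storage Account",
        "Common Event Format (CEF)",
        "Syslog",
        "Amazon Web Services (AWS)",
    ],
    "Basic": [
        "Microsoft 365 Defender",
        "Azure Firewall",
        "Windows Firewall",
        "Azure WAF",
        "Azure Key Vault",
    ],
    "Intermediate": [
        "Azure Information Protection",
        "Dynamics 365",
        "Azure Kubernetes Service (AKS)",
        "Qualys Vulnerability Management",
    ],
    "Advanced": [
        "Azure Active Directory Identity Protection",
        "Threat Intelligence TAXII",
        "Microsoft Defender Threat Intelligence",
        "Microsoft Defender for IoT",
    ],
}
TIER_ORDER = ["Foundational", "Basic", "Intermediate", "Advanced"]


def connectors_up_to(tier):
    """Return every connector from Foundational through the given tier."""
    last = TIER_ORDER.index(tier)
    selected = []
    for name in TIER_ORDER[: last + 1]:
        selected.extend(ZERO_TRUST_TIERS[name])
    return selected


# Example: a POC scoped to the Foundational and Basic tiers.
for connector in connectors_up_to("Basic"):
    print(connector)
```

A partner could then trim the resulting list down to the data sources that actually exist in the test tenants.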

Considerations:

- Free data – Yes! Some data is always free. Please reference the official list of free data sources.

- Microsoft Sentinel benefit for Microsoft 365 E5, A5, F5 and G5 customers – Customers that are currently paying for those licenses can receive “a data grant of up to 5MB per user/day to ingest Microsoft 365 data”. For information on the Sentinel benefit, please review the information here and Rod Trent’s blog post on locating the free benefit.

- Ingestion Time Transformation – Many tables also support ingestion time transformation, which is another way to reduce costs. Partners can use ingestion time transformations to filter out data that is not useful for security analysis. There is also a library of transformations available. If you want to see some examples, please reference my blog post on disguising data. As you can see in my post, you can also use these transformations to mask data.

- Commitment Tiers – Previously known as Capacity Reservations, this is another way to reduce costs, by as much as 65% compared to pay-as-you-go.

- Storage Options – See the Storage Options section in Part 3.
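To get a rough feel for the Microsoft 365 E5, A5, F5 and G5 benefit mentioned above, the arithmetic can be sketched in Python. The 5 MB per user per day figure comes from the quoted benefit description; the function names and the sample numbers are hypothetical, and the sketch assumes the grant only offsets ingestion from the eligible Microsoft 365 data sources.

```python
# Grant size taken from the benefit description quoted above.
GRANT_MB_PER_USER_PER_DAY = 5


def daily_grant_mb(licensed_users):
    """Maximum daily data grant, in MB, for the given number of licensed users."""
    return licensed_users * GRANT_MB_PER_USER_PER_DAY


def billable_mb(eligible_ingestion_mb, licensed_users):
    """Eligible daily ingestion, in MB, that exceeds the grant (never negative)."""
    return max(0, eligible_ingestion_mb - daily_grant_mb(licensed_users))


# Made-up example: 1,000 licensed users.
print(daily_grant_mb(1000))      # 5000
print(billable_mb(6500, 1000))   # 1500
print(billable_mb(3000, 1000))   # 0
```

With 1,000 licensed users the grant covers up to 5,000 MB per day, so only eligible ingestion above that amount would be billed in this simplified model.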

In Part 3 of this series of posts, I cover the following topics: Agents and Forwarders, Sample Data, Storage Options, Repositories, Workspace Manager, Cross-workspace, and Other Resources.
