TL;DR – Sentinel automation playbooks using the OpenAI Logic App connector.

A few of my partners have been brainstorming ways to integrate OpenAI with Microsoft Sentinel, so I set out to do my own research (read: playing). I read a few blogs where people were using the OpenAI connector to update comments and even add incident tasks, which was impressive and inspiring! However, I wanted to go a little further. I had two main goals during my initial testing:

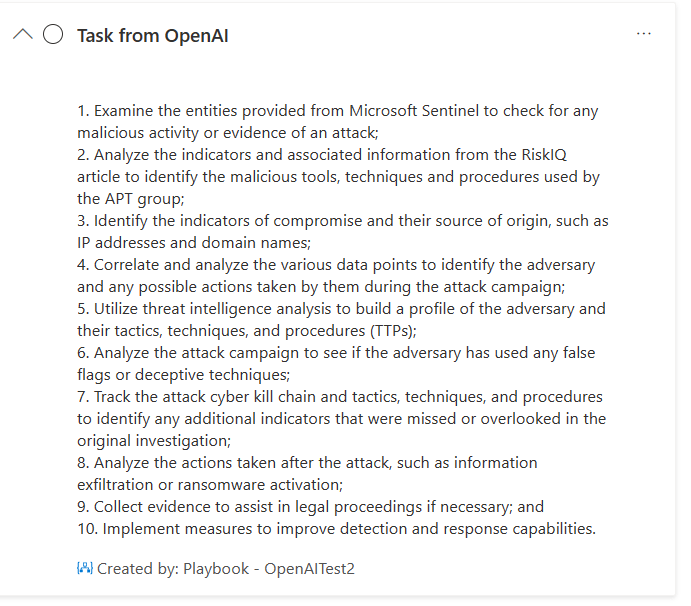

- I wanted to separate the tasks. In the original testing I saw with Sentinel, the steps that OpenAI recommended were all bundled together in one task. I wanted to split them into distinct tasks, because one of the great qualities of the tasks feature is being able to see progress as each task is completed.

- I wanted to update additional information within the incident, not just update comments and add tasks. Specifically, I wanted to adjust the severity depending on the information that OpenAI was able to provide. And I wanted to add a tag that noted this incident had been updated by OpenAI.

By the way, I am using two connectors: the Microsoft Sentinel Logic App connector and the OpenAI Logic App connector. Please note that when using the OpenAI Logic App connector, the key needs to be entered as “Bearer YOUR_API_KEY”, as noted in the documentation. Otherwise, you will get a 401 error.
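To make the key format concrete, here is a minimal Python sketch of the value that goes into the connector's key field. The helper name `bearer_value` is hypothetical and is not part of either connector; it only illustrates the “Bearer ” prefix described above.

```python
def bearer_value(api_key: str) -> str:
    """Return the string to enter as the key: 'Bearer ' followed by the API key.

    Hypothetical helper for illustration only; the connector itself just
    expects the final string.
    """
    key = api_key.strip()
    if not key:
        raise ValueError("API key is empty")
    # If the prefix was already added, do not add it a second time.
    if key.lower().startswith("bearer "):
        key = key[len("bearer "):].strip()
    return f"Bearer {key}"


print(bearer_value("YOUR_API_KEY"))
```

Entering the raw key without the prefix is the case that produces the 401 error mentioned above.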

A quick warning before you continue reading: this blog post is about testing what is possible, and the results may not be perfectly accurate or ideal for production scenarios at this time.

Separating the tasks

My initial goal was to separate the tasks. Here is what I mean by that: when I followed the steps from the initial blogs I read, all of the recommended steps were being added into a single task, as shown below.

So, this is how I separated the tasks. First, my prompt tells OpenAI specifically that I am looking for 3 steps and that I *only* want to see the first step, and I’ll let it know when I am ready for the next steps. I am only using the Incident Description in the prompt, but you can probably add additional information, such as the title, entities, tactics, techniques, etc.
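As a rough illustration of the prompting pattern, the sketch below builds one prompt per step from the incident description. The exact wording is my assumption and not the prompt used in the playbook, and `build_prompt` and `ordinal` are hypothetical names. Because each API call is independent, this sketch repeats the description in every prompt.

```python
def ordinal(n: int) -> str:
    """Return 1 -> '1st', 2 -> '2nd', 3 -> '3rd', 4 -> '4th', and so on."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def build_prompt(step: int, description: str, total_steps: int = 3) -> str:
    """Build the prompt for one step (assumed wording, for illustration only)."""
    if not 1 <= step <= total_steps:
        raise ValueError(f"step must be between 1 and {total_steps}")
    if step == 1:
        return (
            f"I need {total_steps} steps to investigate this incident. "
            "Show me only the 1st step; I will tell you when I am ready "
            f"for the next steps.\nIncident description: {description}"
        )
    # Follow-up prompts: ask only for the next step, repeating the context.
    return (
        f"Ready for the {ordinal(step)} step of {total_steps}. "
        f"Show me only that step.\nIncident description: {description}"
    )


for step in (1, 2, 3):
    print(build_prompt(step, "Multiple failed sign-ins followed by a success"))
    print("---")
```

Adding the title, entities, tactics, or techniques would just mean appending more lines to the prompt string.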

So, once I get the output from that first step, I feed it to the “Add task to incident” action, and I name that step “Task no.1 from OpenAI”. Notice that I am passing it within a “For each” container. The reason is that I really just need the text within it; otherwise I’ll get some of the other information that comes with the output, and it just doesn’t look pretty. 🙂
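What the “For each” container does here can be sketched in Python: loop over the `choices` array in the completion output and keep only each item's `text`. The sample payload below is a trimmed, made-up example in the shape of an OpenAI text completion response, and `choice_texts` is a hypothetical helper name.

```python
from typing import Any, Dict, List


def choice_texts(response: Dict[str, Any]) -> List[str]:
    """Return only the generated text of each choice, trimmed of whitespace."""
    texts = []
    for choice in response.get("choices", []):
        text = (choice.get("text") or "").strip()
        if text:  # skip empty completions
            texts.append(text)
    return texts


# Made-up sample in the shape of a text completion response.
sample = {
    "id": "cmpl-example",
    "object": "text_completion",
    "choices": [
        {
            "text": "\n\nStep 1: Review the sign-in logs.",
            "index": 0,
            "finish_reason": "stop",
        }
    ],
    "usage": {"total_tokens": 42},
}

for text in choice_texts(sample):
    print(text)
```

Passing the whole output instead would also drag along fields such as `id`, `index`, and `usage`, which is the extra information that does not look pretty in a task.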

And then I add similar actions for the next steps. However, this time the prompt says “Ready for the 2nd step…”

And finally, the last step in my test.

Now, when I run my playbook, it ends up adding separate tasks that look like this.

Now, instead of having all the steps in one task, I get separate tasks that an analyst can check off one by one, which lets me see the progress as the tasks are completed.

I still have some challenges because the behavior from the ChatGPT UI is not quite the same as when I use the API, but I was able to make it work using the prompts noted above. There’s probably more work to be done in that area.

Updating additional information

I covered the items on the left branch of my Logic App above. Now, let’s move to the right branch.

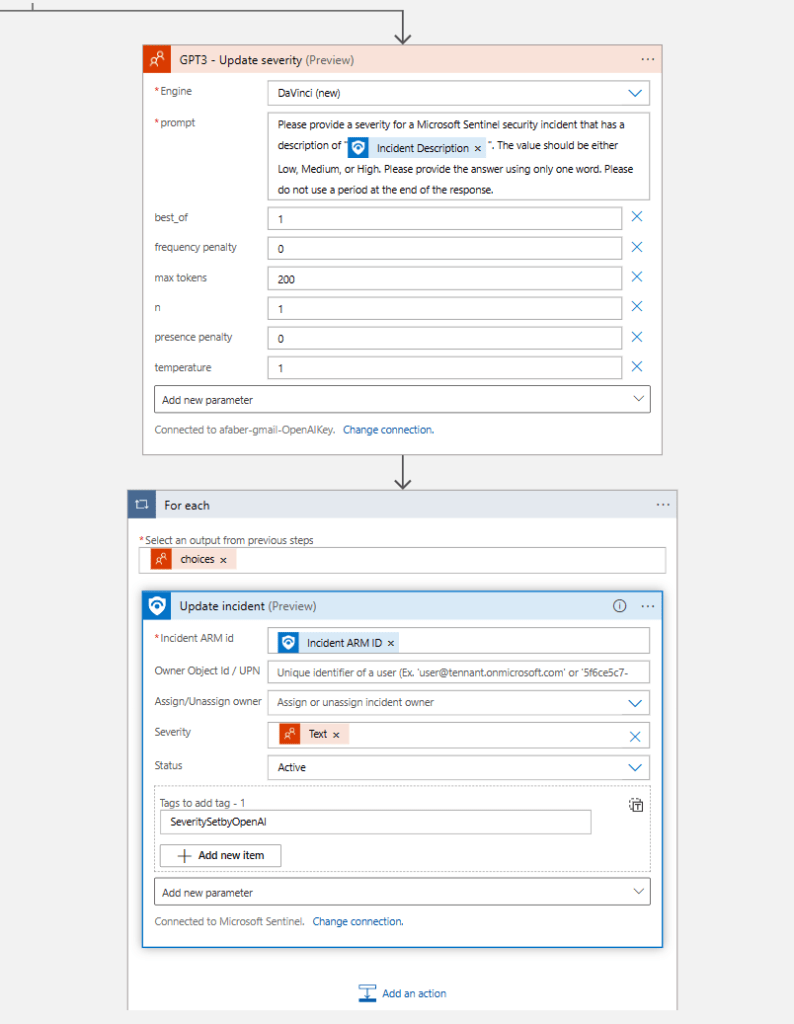

Here, I am asking OpenAI what the severity should be for this type of incident. Again, for my test this is based on the Incident Description, but you can probably add additional information, such as the title, entities, tactics, techniques, etc.

And again, I am passing the output within a “For each” container, because I really just need the text within, and it wouldn’t work if I just used choices directly, because the format of the severity attribute would not be correct. Additionally, I am adding a tag to highlight that the severity has been set according to the information received from OpenAI. Finally, I am also updating the status to “Active”; this is really just to make my testing easier.
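The format concern can be illustrated with a small Python sketch that reduces free-form model text to one of the severity values Sentinel incidents accept (High, Medium, Low, Informational). The function name `parse_severity` is hypothetical, and returning `None` when nothing matches is my assumption; the playbook itself relies on the prompt returning a clean value.

```python
import re
from typing import Optional

# Severity values accepted on a Microsoft Sentinel incident.
SEVERITY_PATTERN = re.compile(
    r"\b(high|medium|low|informational)\b", flags=re.IGNORECASE
)


def parse_severity(model_text: str) -> Optional[str]:
    """Return the first severity word found in the text, or None.

    This is a naive first-match rule: it does not understand negation
    such as "not high", so treat it as a sketch only.
    """
    match = SEVERITY_PATTERN.search(model_text)
    if match is None:
        return None
    # Normalize casing to the form Sentinel expects, e.g. "Medium".
    return match.group(1).capitalize()


print(parse_severity("\n\nSeverity: medium."))
```

A `None` result could be handled by leaving the incident severity unchanged rather than guessing.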

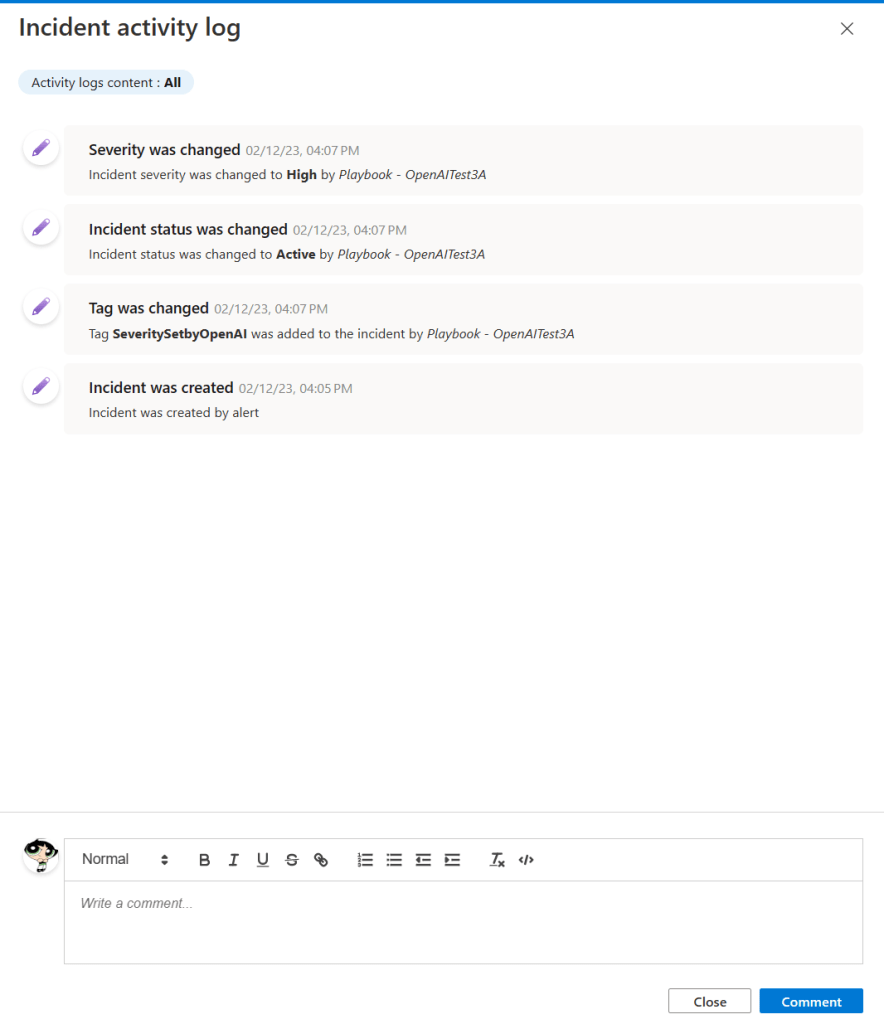

So, when I run the playbook, I can see in my activity log that the severity and status have been updated, and the tag was added, as shown below.

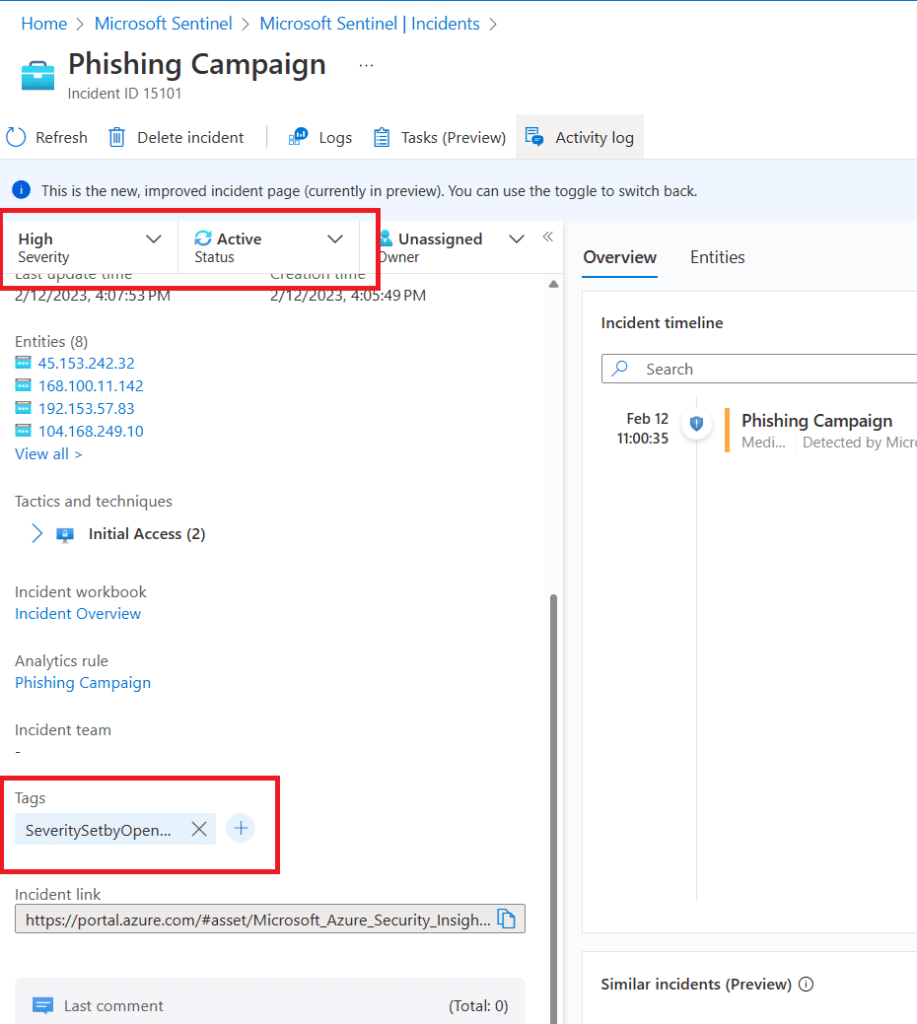

And I can see them updated on the incident itself as well.

Final thoughts

If you want this playbook to trigger automatically, don’t forget to assign the Microsoft Sentinel Responder role to your playbook’s managed identity. You can do this within Logic Apps, as shown below.

And if you need your SOC analysts to do this for customers, check out my previous blog post on this topic.

I am just getting started testing this integration, but I can already see the potential it has to help SOC analysts. This specific scenario may not be the right playbook for common incidents, but it may provide a head start with uncommon incidents. As usual, I hope this blog post is useful and I hope it sparks some ideas about how to use these features for your own requirements.

Fantastic stuff. Does the GPT that is integrated with Sentinel have current Internet access? In other words could I incorporate elements like “What is the VirusTotal score for $sha256” or “What is Symantec’s category for $remoteurl”?


If the entity is associated with the incident, which you would configure via the analytic rule, then you can use the entities dynamic content value. Here is the list of supported entities: https://learn.microsoft.com/en-us/azure/sentinel/entities#supported-entities
